AI Doesn't Fix Misalignment. It Hides It.

AI tools for Jira seem to solve a familiar problem: turning vague tickets into something actionable. The catch is that polished output can make teams feel aligned before they actually are.

The Genie Problem

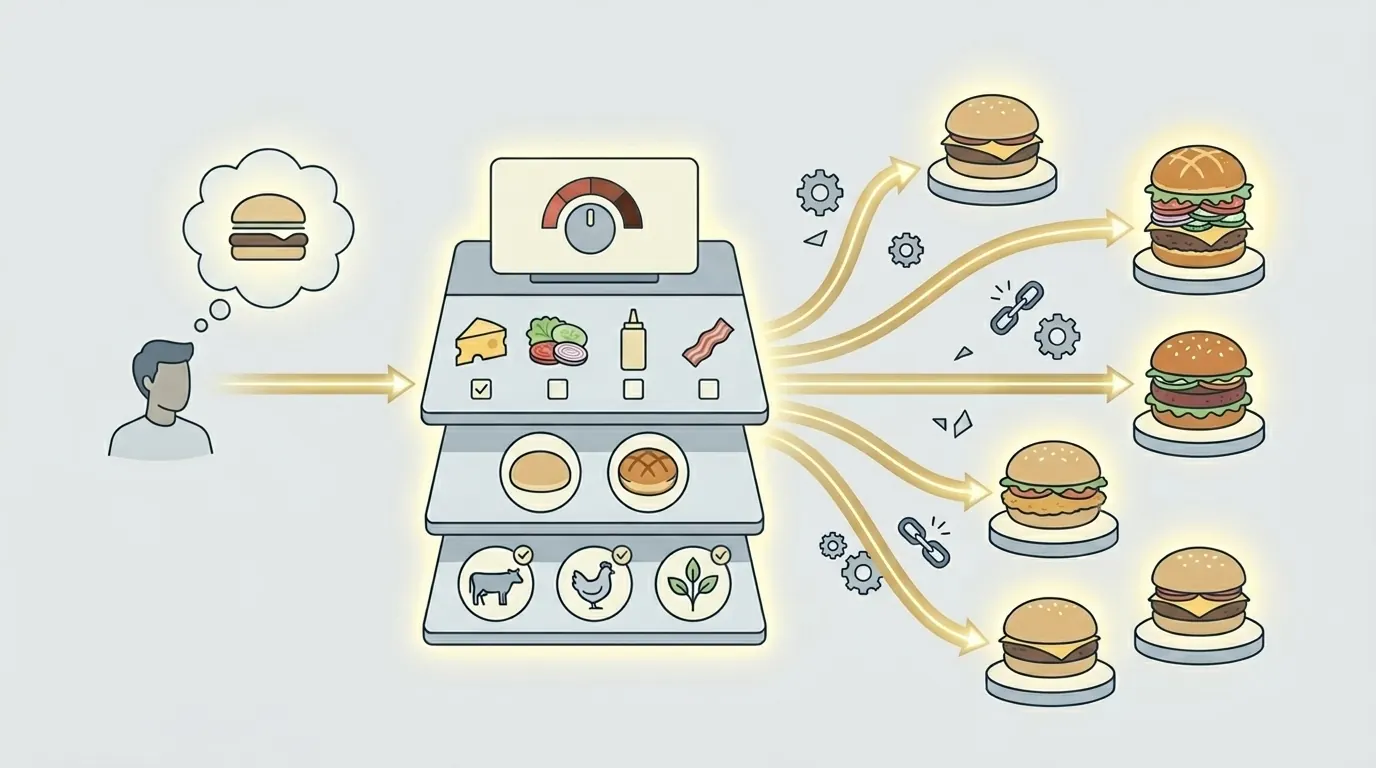

There is a mental model I keep coming back to when I think about AI. AI is a genie. It does exactly what you say, not what you mean. The distance between those two things is where a surprising amount of software delivery falls apart.

You know the situation. A product manager is juggling four things at once and writes a Jira ticket in two minutes. The ticket is not maliciously bad. It is vague, rushed, incomplete, and full of assumptions that never got said out loud.

Engineering picks it up, builds something reasonable from the words that exist, and two weeks later everybody is in a review saying some version of: that is not what I meant.

Nobody was lying. Nobody was lazy. The vision just never became explicit enough before the work began. The AI version of this problem is even harsher, because the model has no ambient team context to fill in the blanks. If the ticket carries almost no context, the genie is working from almost nothing.

The Weakest Artifact

Think about where the real knowledge of a product actually lives. Your codebase knows your architecture, your naming, your dependencies, and your implementation boundaries. Your design files know your visual language, interaction patterns, and the decisions you have repeated enough to become a system. Your previous tickets and docs know your team's vocabulary and the way you usually frame trade-offs.

A Jira ticket knows almost none of that. It is often the least context-rich artifact in the whole stack. So when a team pastes a ticket into an AI tool and expects high-quality output, it is effectively asking for a great answer from the least informative input available.

That is why the output so often sounds plausible but fits badly. Acceptance criteria assume the wrong user. Design ideas drift into a visual style you would never ship. Technical recommendations ignore your stack, your deployment model, or the shortcuts your team intentionally does not take. The AI is not failing. It is doing the best it can with a terrible briefing.

Confident Nonsense

Most AI tools for Jira follow the same shape, and the experience feels productive because the result is structured, neat, and fast.

It usually looks like this:

- open the issue;

- click the button;

- get a description, some acceptance criteria, and maybe a breakdown into subtasks.

But structure is not the same thing as alignment. If the input was vague and context-free, the output is just a more confident-looking version of the same ambiguity. In practice, the tool may have made the problem worse, because the polished formatting now discourages people from questioning the assumptions before delivery starts.

This is the quiet danger: garbage in, garbage out — except now the garbage has headings, bullets, and the tone of certainty. A team can walk into implementation with more confidence and less clarity at exactly the same time.

What AI Actually Needs

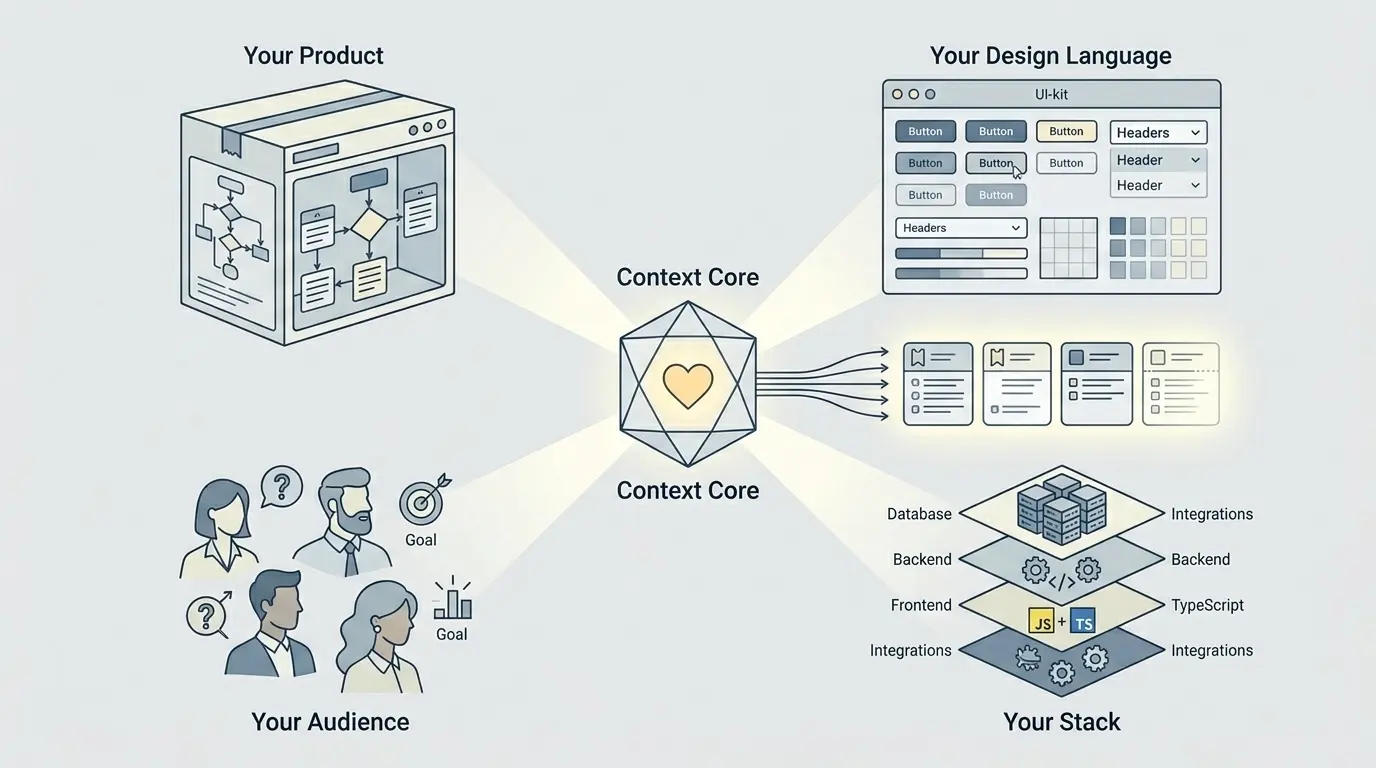

Before AI can produce something genuinely helpful for a Jira issue, it needs to understand four things about the world it is working in:

- Your product — what it does, what outcomes matter, and what the feature is supposed to improve for users and for the business.

- Your design language — the visual patterns, UI kit, and interaction habits that make the output feel like your product instead of a generic startup demo.

- Your audience — who these users are, what they need, what they expect, and what they do not know. This changes wording, interaction design, and edge cases in almost every feature.

- Your stack — real frameworks, runtime boundaries, integrations, data constraints, and technical limitations that should shape what is even considered a valid suggestion.

The interesting part is that most teams already have all of this. It exists in code, docs, mocks, and team memory. It just does not exist in Jira in a form the AI can see. So tools that look only at Jira are blind to the most important context in the project.
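As a rough illustration, the four kinds of context above can be written down as a small data structure with a check for what is still missing. This is a minimal sketch: the `ProjectContext` class, the `missing_context` helper, and the example values are hypothetical, not part of any real tool.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ProjectContext:
    """Hypothetical container for the four kinds of context described above."""
    product: str          # what the product does and which outcomes matter
    design_language: str  # visual patterns, UI kit, interaction habits
    audience: str         # who the users are and what they expect
    stack: str            # frameworks, integrations, technical limits


def missing_context(ctx: ProjectContext) -> list[str]:
    """Return the names of fields that are empty or whitespace-only."""
    return [f.name for f in fields(ctx) if not getattr(ctx, f.name).strip()]


# Made-up example values: two of the four fields were never filled in.
ctx = ProjectContext(
    product="Invoicing tool for freelancers",
    design_language="",
    audience="Non-technical solo users",
    stack="",
)
print(missing_context(ctx))  # → ['design_language', 'stack']
```

An empty field here is the structured equivalent of a ticket that never mentions the design system or the stack: the model has to guess.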

If you want the practical version of that context layer, "Your AI Is Guessing Your Product" breaks down what to store and how to reuse it.

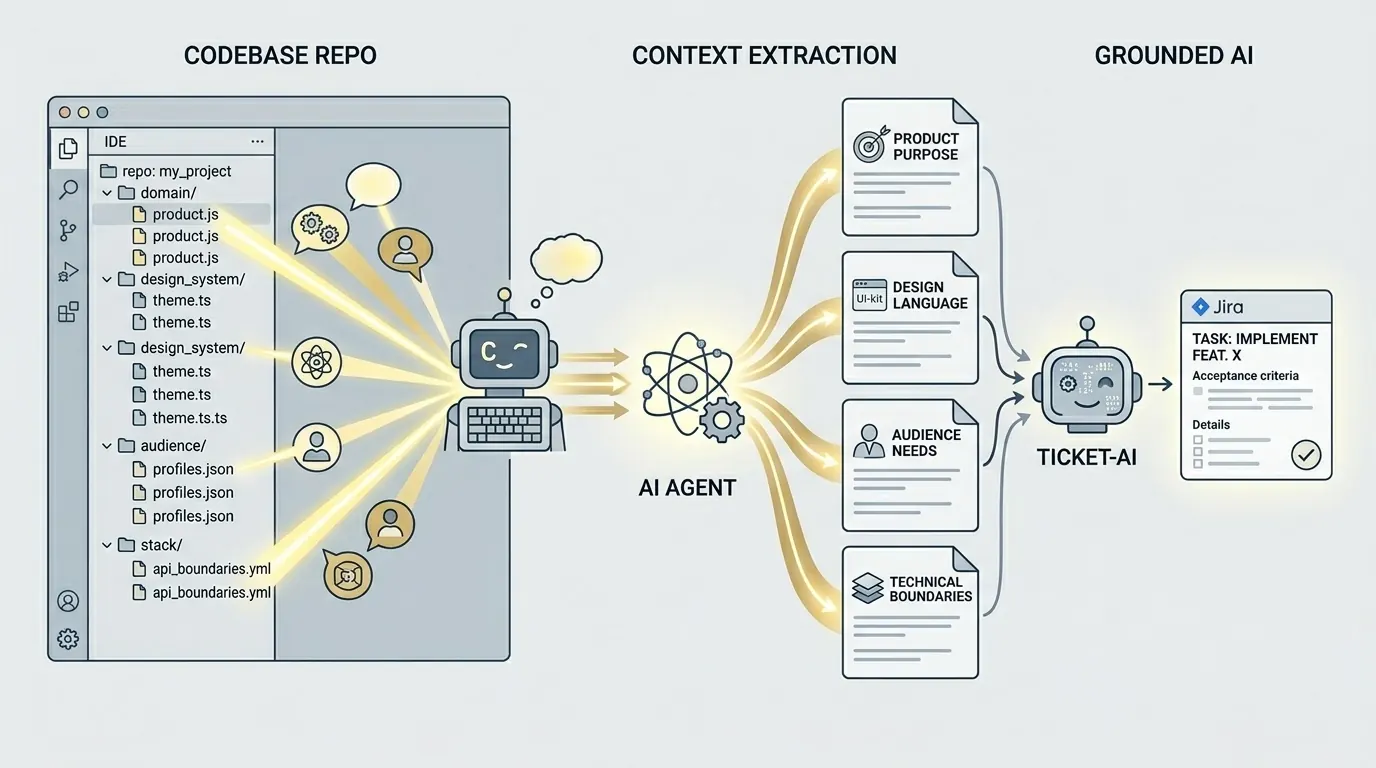

Your Code Knows More

One of the genuinely exciting things about current coding agents is that they are very good at reading a repository and turning it into plain-language context. You can point Claude Code, Codex, or another capable coding agent at your repo and ask for a markdown summary of product purpose, stack, implementation boundaries, known gaps, and business signals. It takes minutes, not a week-long documentation project.

That changes the equation. Instead of asking AI to generate from a two-sentence ticket in a vacuum, you can give it a grounded summary of the product, the design system, the audience, and the stack. Suddenly the model is not improvising in the dark. It is reasoning inside a world that actually resembles your project.

Your codebase has been holding the answers all along. Component names expose design language. Domain models reveal how the product thinks. Integrations and libraries explain technical boundaries. You do not need to invent context from scratch. You need a way to extract it into a format another AI can reliably use.
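One way to make that extraction repeatable is to keep the request to the coding agent as a small template. The sketch below only builds the prompt text; it does not call any agent. The function name and the exact wording are assumptions, and the section list simply mirrors the summary topics mentioned earlier.

```python
# Section names taken from the summary topics named in the article.
SUMMARY_SECTIONS = [
    "Product purpose",
    "Stack",
    "Implementation boundaries",
    "Known gaps",
    "Business signals",
]


def build_summary_prompt(sections: list[str]) -> str:
    """Compose a plain-text request for a markdown summary of a repository."""
    headings = "\n".join(f"## {name}" for name in sections)
    return (
        "Read this repository and write a markdown summary.\n"
        "Use exactly these headings, in this order:\n"
        f"{headings}\n"
        "Only state what the code or docs support; "
        "write 'unknown' where the repository gives no evidence."
    )


# The resulting text can be pasted into whichever coding agent you use.
print(build_summary_prompt(SUMMARY_SECTIONS))
```

Asking the agent to write "unknown" instead of guessing keeps the summary from becoming one more confident-looking artifact.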

Hidden Decisions

Even with excellent project context, there is still a second problem AI cannot solve by guessing: the decisions that no one has made yet. Every Jira issue carries hidden assumptions about permissions, rollout rules, edge cases, backwards compatibility, interaction details, and what success actually looks like when the feature meets real users.

Those decisions do not disappear when a ticket gets picked up. They simply resurface mid-sprint, which is the most expensive possible time to notice them. A designer asks which existing screen is the reference point. An engineer needs to know whether there is already an API for this flow. Somebody realizes the acceptance criteria assumed logged-in users, while half the experience is anonymous. None of this is surprising in hindsight. It was always there.

That is why context alone is not enough. You also need questions — specific questions, grounded in the ticket and your actual product context together. Should this apply to existing users or only new ones? What happens if the browser closes mid-flow? Is the feature for admins only? Is this a one-time action or a repeated behavior? A short burst of honest answers creates more alignment than another polished spec ever will.
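A simple way to keep those questions from getting lost is to track them as explicit, answerable items. The sketch below is an assumption about how that could look: `Decision` and `unresolved` are hypothetical names, and the answers are invented for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    """A question the ticket leaves open, plus its answer once someone decides."""
    question: str
    answer: Optional[str] = None  # None means nobody has decided yet


def unresolved(decisions: list[Decision]) -> list[str]:
    """Return the questions that still have no non-empty answer."""
    return [d.question for d in decisions if not (d.answer or "").strip()]


# Questions from the paragraph above; the answers are made up.
decisions = [
    Decision("Should this apply to existing users or only new ones?", "New users only"),
    Decision("What happens if the browser closes mid-flow?"),
    Decision("Is the feature for admins only?", "No"),
    Decision("Is this a one-time action or a repeated behavior?"),
]

for question in unresolved(decisions):
    print("Still open:", question)
```

A non-empty result before the sprint starts is the cheap version of the mid-sprint surprise.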

How Just Works

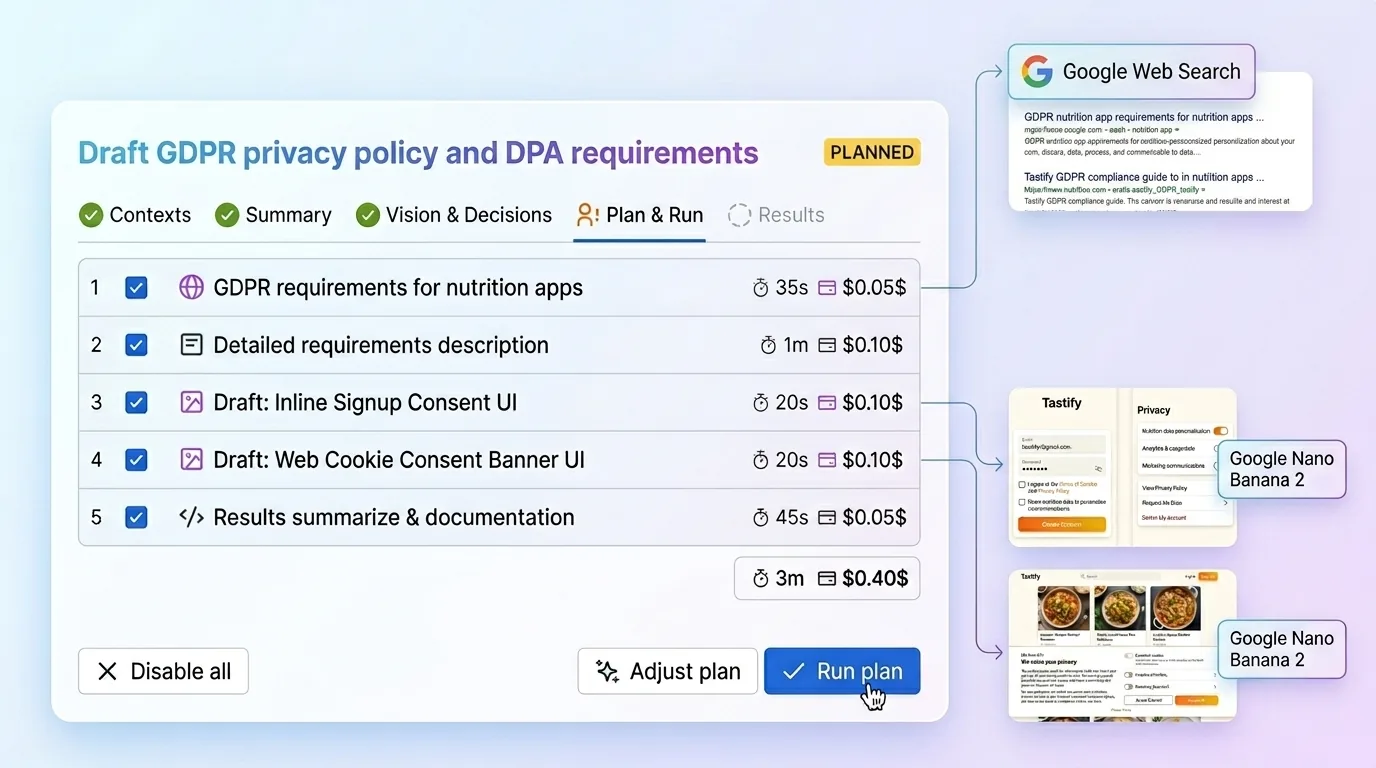

Here is the workflow I built into Just: AI Assistant for Jira. It has three steps:

- Set project context once through four structured fields: product summary, design system, audience, and stack. Just gives you prompts you can run through Claude Code or any coding agent to generate those summaries from your repository. You paste the results in once, and that context gets reused across future tickets.

- Open a Jira issue. No prompt engineering, no extra setup per task. Just reads the issue together with your stored context and surfaces insights that are actually shaped by your project. From there it asks clarifying questions that are not generic — they are grounded in your product, your tech reality, and the design language your team already works with.

- Build the plan. Just turns those answers into requirements, design direction, edge cases, expected outcomes, and a structured execution path the team can work from. It can also pull in fresh web context where that matters, and it works with all five major AI providers so you can use the best model for each step. The point is not to feel magical. The point is to help the team generate the right thing, not just something fast. You can see the full flow at aiapps.me.
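To make the three steps above concrete, here is a minimal sketch of how stored context, an issue, and clarified answers could be combined into one planning prompt. It is not Just's actual implementation; the function name, the section labels, and the example values are all hypothetical.

```python
def build_planning_prompt(
    context: dict[str, str],
    issue: str,
    answers: dict[str, str],
) -> str:
    """Combine project context, the issue text, and answered questions."""
    context_block = "\n".join(f"{name}: {text}" for name, text in context.items())
    answers_block = "\n".join(f"Q: {q}\nA: {a}" for q, a in answers.items())
    return (
        "PROJECT CONTEXT\n" + context_block + "\n\n"
        "ISSUE\n" + issue + "\n\n"
        "CLARIFIED DECISIONS\n" + answers_block + "\n\n"
        "Write requirements, design direction, edge cases, and expected outcomes."
    )


# Made-up example inputs.
prompt = build_planning_prompt(
    context={
        "Product": "Invoicing tool for freelancers",
        "Design system": "In-house UI kit, dense tables",
        "Audience": "Non-technical solo users",
        "Stack": "Web app with a REST backend",
    },
    issue="Add a way to resend an invoice.",
    answers={"Is the feature for admins only?": "No, every user can resend."},
)
print(prompt)
```

The order is deliberate in this sketch: context first, then the ticket, then the decisions, so the instruction at the end is read against everything the team has already made explicit.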

What This Changes

So why do most AI tools for Jira make the alignment problem worse instead of better? Because they usually step in after ambiguity is already inside the ticket, then make that ambiguity look polished and complete.

The real leverage is earlier. Be clear about what you actually want, surface the hidden decisions before implementation starts, and give AI enough context to work inside the real shape of your product instead of guessing from a context-poor brief.

That is the whole point. If the team understands what it wants before the work begins, AI becomes useful. If it does not, the output may still look impressive — but you will probably pay for that confusion later in rework.